Caching in RESTful Applications: How to Improve API Speed

In modern API-based systems, performance is critical to a good user experience. Each request to an API may involve database queries, complex calculations, or calls to other services. As traffic grows, these steps can add significant latency.

One of the most effective ways to improve performance is caching. A cache temporarily stores frequently requested responses or data, reducing the need to recompute results or query the database on every request.

In this article, we will explore the main challenges of implementing caching in RESTful APIs, as well as best practices for using it well.

What is a cache?

A cache is a temporary storage layer that keeps frequently used data close at hand, so it does not have to be retrieved each time from a primary source such as a server or a database. This reduces response time and improves the overall performance of web applications. Depending on where the cached data is stored, we talk about a client-side cache or a server-side cache.

Common challenges when implementing cache in RESTful APIs

1. Stale data

One of the main challenges of caching is preventing users from receiving outdated information.

When an API caches a response and the data changes in the database, there is a risk that subsequent requests will continue receiving the old version. This can be problematic in systems where information changes frequently, such as e-commerce platforms or financial applications.

For example, if an API returns product information and the price changes, a poorly managed cache could continue showing the previous price for some time.

2. Defining which endpoints should use cache

Not all operations in an API should be cached.

Generally, GET operations are the ideal candidates because they retrieve information without modifying it. In contrast, POST, PUT, and DELETE operations usually modify data: their responses are normally not cached, and they should trigger invalidation of any cached entries for the resources they change.

Identifying which endpoints truly benefit from cache is key to avoiding inconsistencies or unnecessary complexity.

3. Cache invalidation control

Cache invalidation is famously one of the hard problems in software engineering: deciding when a cached entry should be discarded.

If entries are invalidated too early, the benefit of the cache is lost.

If they are invalidated too late, users may receive incorrect information.

Finding the right balance depends on the type of data and system behavior.
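One common way to handle invalidation is to remove the cached entry whenever the underlying data is written. The following is a minimal sketch of that idea, not a definitive implementation: the `database` and `cache` dictionaries and the `get_price` and `update_price` functions are hypothetical stand-ins for a real data store, cache, and API handlers.

```python
from typing import Dict, Optional

# Hypothetical stand-ins: one dict plays the database, another the cache.
database: Dict[str, float] = {"sku-1": 10.0}
cache: Dict[str, float] = {}


def get_price(sku: str) -> Optional[float]:
    """Read path (e.g. a GET handler): serve from the cache when possible."""
    if sku in cache:
        return cache[sku]
    price = database.get(sku)
    if price is not None:
        cache[sku] = price
    return price


def update_price(sku: str, new_price: float) -> None:
    """Write path (e.g. a PUT handler): update the source, then invalidate."""
    database[sku] = new_price
    # Drop the cached copy so the next read fetches the new value.
    cache.pop(sku, None)
```

After `update_price("sku-1", 12.5)`, the next call to `get_price("sku-1")` misses the cache and reads the new price from the database, so the old price is never served once the write has completed.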

Practical solutions and best practices

1. Leverage HTTP cache headers

RESTful APIs can use the built-in HTTP caching mechanisms to improve performance without additional infrastructure.

Some important headers include:

Cache-Control

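Defines whether, where, and for how long a response can be stored, through directives such as max-age, private, or no-store.

ETag

An identifier for a specific version of a resource. The client sends it back in the If-None-Match header, and the server can answer 304 Not Modified if the resource has not changed.

Last-Modified

Indicates the date and time the resource was last modified. The client can send it back in the If-Modified-Since header to revalidate its copy.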

With these headers, clients and proxies can reuse stored responses without contacting the server while they are still fresh, and revalidate them afterwards without downloading the full body again.
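The interaction between Cache-Control and ETag can be sketched as follows. This is a simplified, framework-independent illustration: the `make_etag` and `respond` functions are hypothetical names, the ETag is derived from a hash of the body (one common approach among several), and the comparison assumes the client sends a single ETag value in If-None-Match.

```python
import hashlib
from typing import Dict, Optional, Tuple


def make_etag(body: bytes) -> str:
    """Derive an ETag from the response body; ETags are quoted strings."""
    return '"' + hashlib.sha256(body).hexdigest()[:16] + '"'


def respond(
    body: bytes, if_none_match: Optional[str], max_age: int = 60
) -> Tuple[int, Dict[str, str], bytes]:
    """Return (status, headers, body) for a GET request."""
    etag = make_etag(body)
    headers = {"Cache-Control": f"public, max-age={max_age}", "ETag": etag}
    if if_none_match == etag:
        # The client's stored copy is still valid: send headers only.
        return 304, headers, b""
    return 200, headers, body
```

A first request with no If-None-Match value receives status 200 with the body and an ETag. If the client repeats the request with that ETag and the body is unchanged, the function returns 304 with an empty body, which saves bandwidth even though the server is still contacted.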

2. Implement in-memory or distributed cache

For high-traffic APIs, a common practice is to use dedicated cache systems such as:

- Redis

- Memcached

These systems allow storing responses or processed data in memory, significantly reducing API response times.

For example, an API that queries user information from a database can store the result in Redis for a few minutes, avoiding repeated database queries.

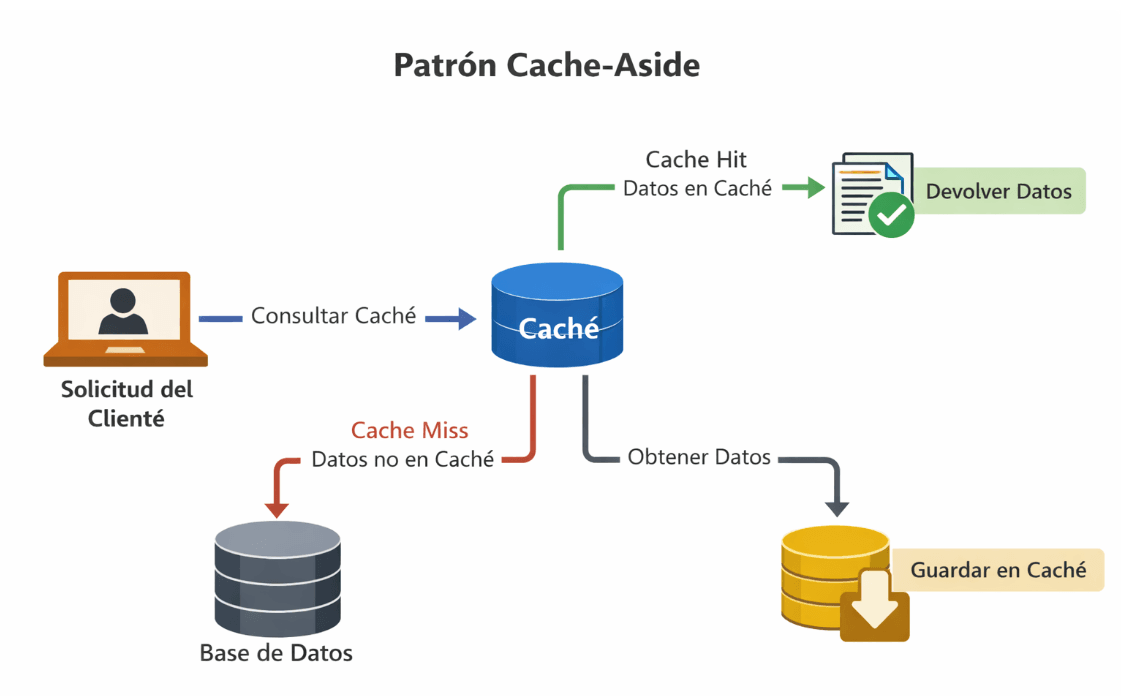
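To make the idea concrete without requiring a running cache server, here is a minimal sketch that uses a toy in-process cache in place of Redis or Memcached. The `InMemoryTTLCache` class and the `cache_user` and `cached_user` helpers are hypothetical names chosen for illustration; a real deployment would call the client library of the chosen cache system instead.

```python
import json
import time
from typing import Callable, Dict, Optional, Tuple


class InMemoryTTLCache:
    """Toy in-process cache; a stand-in for a Redis or Memcached client."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock
        self._items: Dict[str, Tuple[float, str]] = {}

    def set(self, key: str, value: str, ttl_seconds: float) -> None:
        """Store a value together with its expiry time."""
        self._items[key] = (self._clock() + ttl_seconds, value)

    def get(self, key: str) -> Optional[str]:
        """Return the value, or None if it is missing or has expired."""
        entry = self._items.get(key)
        if entry is None:
            return None
        expires_at, value = entry
        if self._clock() >= expires_at:
            del self._items[key]  # evict expired entries lazily on read
            return None
        return value


def cache_user(cache: InMemoryTTLCache, user_id: int, user: dict,
               ttl_seconds: float = 300.0) -> None:
    """Serialize the user record and keep it for a few minutes."""
    cache.set(f"user:{user_id}", json.dumps(user), ttl_seconds)


def cached_user(cache: InMemoryTTLCache, user_id: int) -> Optional[dict]:
    """Return the cached user record, or None on a miss."""
    raw = cache.get(f"user:{user_id}")
    return json.loads(raw) if raw is not None else None
```

The clock is injectable so that expiry can be exercised in tests without waiting. Unlike this sketch, a distributed cache is shared by every instance of the API, which matters once the service runs on more than one server.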

3. Use the Cache-Aside pattern

One of the most widely used patterns in APIs is Cache-Aside, which works as follows:

- The application receives a request.

- It first checks the cache.

- If the data is available (cache hit), it is returned immediately.

- If not (cache miss), it queries the database.

- Then it stores the data in the cache for future requests.

This pattern balances performance with control over the stored data: the application decides what to cache, and only data that is actually requested ends up in the cache.
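The steps above can be sketched in a few lines. This is an illustrative version under simple assumptions: the cache is a plain dictionary, and `load_from_db` is a hypothetical callable standing in for the real database query.

```python
from typing import Callable, Dict, Optional


def get_with_cache_aside(
    key: str,
    cache: Dict[str, str],
    load_from_db: Callable[[str], Optional[str]],
) -> Optional[str]:
    """Cache-Aside read: check the cache, fall back to the database."""
    value = cache.get(key)
    if value is not None:
        return value  # cache hit: no database access needed

    value = load_from_db(key)  # cache miss: query the primary source
    if value is not None:
        cache[key] = value  # store the result for future requests
    return value
```

The first call for a given key runs `load_from_db`; later calls for the same key are answered from the dictionary. Missing records are not cached here, so repeated lookups of a nonexistent key still reach the database.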

4. Define appropriate expiration times (TTL)

TTL (Time-To-Live) determines how long data remains in the cache before being automatically removed.

Choosing an appropriate TTL depends on the type of information:

- Data that changes little: longer TTL

- Dynamic data: shorter TTL

This helps avoid stale information while maintaining the benefits of caching.
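One way to keep these decisions explicit is a small TTL table keyed by the kind of data. The sketch below is only an illustration: the data kinds and the values in `TTL_BY_KIND` are hypothetical, and suitable numbers depend on how often each kind of data changes in a given system.

```python
from typing import Dict

# Hypothetical TTLs in seconds, from rarely changing to highly dynamic data.
TTL_BY_KIND: Dict[str, int] = {
    "country_list": 24 * 60 * 60,  # changes little: one day
    "product_details": 5 * 60,     # changes occasionally: five minutes
    "stock_level": 10,             # dynamic: ten seconds
}
DEFAULT_TTL = 60  # fallback for kinds without an explicit entry


def ttl_for(kind: str) -> int:
    """Return the TTL in seconds for a kind of data."""
    return TTL_BY_KIND.get(kind, DEFAULT_TTL)


def is_fresh(stored_at: float, kind: str, now: float) -> bool:
    """True while the entry is younger than its TTL."""
    return now - stored_at < ttl_for(kind)
```

With these values, a stock level stored at time 0 is still fresh at 9 seconds and stale at 10, while the country list stays fresh for a full day.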

5. Monitor cache performance

The cache should be monitored continuously to confirm that it is actually improving system performance.

Some important metrics include:

- Cache hit rate: percentage of requests served from the cache.

- API latency: response time of requests.

- Cache memory usage.

Monitoring tools help identify whether the cache is configured correctly or needs adjusting.
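The cache hit rate in particular is simple to compute from two counters. The following is a minimal sketch; the `CacheStats` class is a hypothetical helper, and in practice these numbers usually come from the cache server or a metrics library rather than hand-written counters.

```python
from dataclasses import dataclass


@dataclass
class CacheStats:
    """Counts cache hits and misses and derives the hit rate."""

    hits: int = 0
    misses: int = 0

    def record(self, hit: bool) -> None:
        """Register the outcome of one cache lookup."""
        if hit:
            self.hits += 1
        else:
            self.misses += 1

    @property
    def hit_rate(self) -> float:
        """Fraction of lookups served from the cache (0.0 if none yet)."""
        total = self.hits + self.misses
        return self.hits / total if total else 0.0
```

Three hits and one miss give a hit rate of 0.75, meaning 75% of lookups were served from the cache. A persistently low value can suggest that TTLs are too short or that the cached keys are rarely requested again.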

Success stories in the use of cache and microservices

Case 1: Netflix and the optimization of its global system

Netflix, one of the best-known adopters of microservices-based architectures, makes extensive use of its distributed cache system EVCache to handle billions of daily requests. With this approach, it has kept latency low even during traffic peaks, such as the release of new seasons of popular series.

The use of a distributed cache has not only allowed Netflix to scale its system globally but has also improved the user experience by significantly reducing load times.

Case 2: Twitter and data consistency

Twitter faces the challenge of keeping data consistent in near real time for hundreds of millions of active users. To do this, it combines cache systems and distributed databases with advanced cache invalidation strategies.

This combination allows them to ensure that users see updated content almost instantly, without sacrificing system performance.

Case 3: Shopify and scalability in e-commerce

Shopify, a leading e-commerce platform, uses caching to handle large volumes of data related to product catalogs, user information, and transactions. By implementing Redis as its main caching solution, Shopify has been able to scale its system to support thousands of online stores, maintaining a smooth user experience even during events like Black Friday.

Conclusion

Caching is a fundamental tool for improving the performance of RESTful APIs. By reducing repeated database queries and reusing previously generated responses, it can significantly decrease latency and improve system scalability.

However, implementing cache correctly requires considering aspects such as data consistency, invalidation strategy, and continuous system monitoring.

By applying practices such as using HTTP headers, in-memory cache systems, patterns like Cache-Aside, and appropriate expiration times, development teams can build faster, more efficient, and scalable APIs.

In an ecosystem where APIs are the core of many modern applications, optimizing their performance through caching can make a big difference in the end-user experience.

