Rate limiting: protect your API and prevent overloads

As online traffic keeps growing and applications and APIs handle thousands or even millions of requests per second, a fundamental need arises: managing that flow of requests efficiently and protecting system resources. This is where rate limiting comes in. Understanding it can make a real difference to the stability, security, and performance of your projects.

Below, we will explain what rate limiting is, how it works, and why its implementation is important.

What is rate limiting and why is it important?

Rate limiting is a technique that controls the number of requests a user, client, or system can make to a service within a defined period of time. For example, an API could be limited to accept a maximum of 100 requests per minute per user.

Why should you implement it?

- Protection against abuse: Helps mitigate attacks such as DDoS (distributed denial-of-service) by limiting unwanted traffic.

- System stability: Helps ensure that your application's resources are not saturated by excessive traffic.

- Fairness among users: Helps ensure that all users get fair access to the available resources.

Using rate limiting not only improves the user experience but also protects your system's infrastructure.

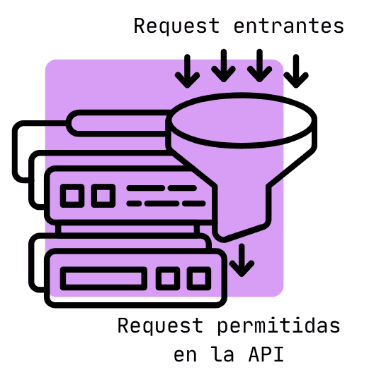

How does rate limiting work?

Rate limiting works by applying predefined rules that cap the number of requests. These rules can be configured in various ways depending on the system's needs, but they generally include parameters such as:

- Maximum number of requests: For example, "allow up to 100 requests per minute per user."

- Time interval: The period during which the limit applies.

- Identification criteria: It can be based on the user's IP address, an authentication token, or any other unique identifier.
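The three parameters above map naturally onto a small fixed-window counter. The Python sketch below is illustrative only: the class names `RateLimitRule` and `FixedWindowLimiter` are hypothetical, and it assumes a single process keeping its counters in memory (a deployment with several servers would usually keep them in a shared store instead).

```python
import time
from typing import Callable, Dict, Tuple


class RateLimitRule:
    """Hypothetical rule: at most `max_requests` per `window_seconds`."""

    def __init__(self, max_requests: int, window_seconds: float) -> None:
        self.max_requests = max_requests      # maximum number of requests
        self.window_seconds = window_seconds  # time interval the limit applies to


class FixedWindowLimiter:
    """Counts requests per identifier (IP, token, user ID) in fixed time windows."""

    def __init__(self, rule: RateLimitRule,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rule = rule
        self.clock = clock
        # identifier -> (start of the current window, requests counted in it)
        self._windows: Dict[str, Tuple[float, int]] = {}

    def allow(self, identifier: str) -> bool:
        now = self.clock()
        start, count = self._windows.get(identifier, (now, 0))
        if now - start >= self.rule.window_seconds:
            # The previous window has expired, so start a fresh one.
            start, count = now, 0
        if count >= self.rule.max_requests:
            return False  # limit already reached in this window
        self._windows[identifier] = (start, count + 1)
        return True
```

For example, `FixedWindowLimiter(RateLimitRule(max_requests=100, window_seconds=60))` would express "100 requests per minute per user" if you pass each user's ID or token as `identifier`. One known trade-off of fixed windows is that a client can send up to twice the limit in a short burst that straddles the boundary between two windows.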

When a user or client exceeds the established limit, the system can respond in different ways, such as:

- Rejecting additional requests with an error code (for example, HTTP 429: Too Many Requests).

- Applying a delay before processing new requests.

- Temporarily blocking the user or client.
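The three possible responses can be sketched as a small policy handler. This is a hypothetical Python example, not the API of any particular framework: `OverLimitPolicy` and `OverLimitHandler` are illustrative names, and the handler returns a bare `(status, headers)` pair instead of a real HTTP response object. The `Retry-After` header it sets is the standard way to tell a client how many seconds to wait before trying again.

```python
import math
import time
from enum import Enum
from typing import Callable, Dict, Tuple


class OverLimitPolicy(Enum):
    REJECT = "reject"  # answer immediately with HTTP 429 Too Many Requests
    DELAY = "delay"    # wait before processing the request
    BLOCK = "block"    # refuse every request from the client for a cool-down period


class OverLimitHandler:
    """Hypothetical handler: turns a limiter decision into (status, headers)."""

    def __init__(self, policy: OverLimitPolicy, retry_after: int = 60,
                 delay_seconds: float = 1.0, block_seconds: int = 300,
                 clock: Callable[[], float] = time.monotonic,
                 sleep: Callable[[float], None] = time.sleep) -> None:
        self.policy = policy
        self.retry_after = retry_after
        self.delay_seconds = delay_seconds
        self.block_seconds = block_seconds
        self.clock = clock
        self.sleep = sleep
        self._blocked_until: Dict[str, float] = {}  # client -> time the block ends

    def handle(self, client_id: str, within_limit: bool) -> Tuple[int, Dict[str, str]]:
        now = self.clock()
        until = self._blocked_until.get(client_id)
        if until is not None:
            if now < until:
                # Still blocked: tell the client how many seconds remain.
                return 429, {"Retry-After": str(math.ceil(until - now))}
            del self._blocked_until[client_id]  # the block has expired
        if within_limit:
            return 200, {}
        if self.policy is OverLimitPolicy.REJECT:
            return 429, {"Retry-After": str(self.retry_after)}
        if self.policy is OverLimitPolicy.DELAY:
            # Simplification: a real system would usually wait until the
            # client is back under its limit rather than a fixed delay.
            self.sleep(self.delay_seconds)
            return 200, {}
        # BLOCK: reject now and remember when the block ends.
        self._blocked_until[client_id] = now + self.block_seconds
        return 429, {"Retry-After": str(self.block_seconds)}
```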

This technique not only protects the system from overloads but also prevents misuse of resources.

Practical examples of rate limiting

To better understand how rate limiting is applied, let's look at some practical examples:

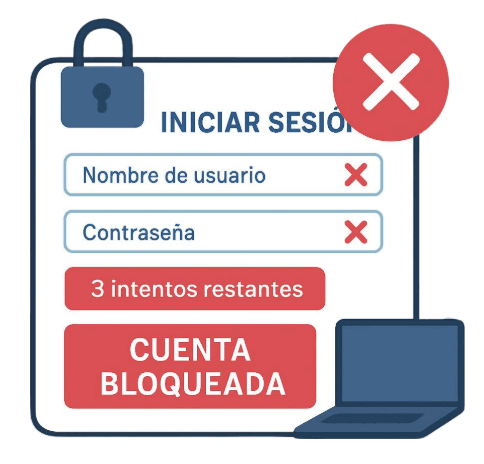

1. Limiting login attempts

One of the most common use cases of rate limiting is controlling login attempts in an application. For example, you can allow a user to make up to 5 login attempts within 10 minutes. If this limit is exceeded, the system can temporarily block the user or display an error message.

This is especially useful for preventing brute-force attacks, in which an attacker tries to guess a password through many repeated attempts.

That is why an account sometimes gets locked after a few wrong passwords, often after as few as three attempts.
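The "5 attempts within 10 minutes" rule can be implemented as a sliding-window log that remembers the timestamps of recent failed logins. The sketch below assumes a hypothetical `LoginAttemptLimiter` class keyed by username, with in-memory state; how it plugs into your actual authentication code is up to you.

```python
import time
from collections import defaultdict, deque
from typing import Callable, Deque, Dict


class LoginAttemptLimiter:
    """Allows at most `max_attempts` failed logins per user within `window_seconds`."""

    def __init__(self, max_attempts: int = 5, window_seconds: float = 600.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_attempts = max_attempts
        self.window_seconds = window_seconds
        self.clock = clock
        # username -> timestamps of recent failed attempts, oldest first
        self._failures: Dict[str, Deque[float]] = defaultdict(deque)

    def _prune(self, username: str, now: float) -> Deque[float]:
        failures = self._failures[username]
        # Drop failures that have slid out of the time window.
        while failures and now - failures[0] >= self.window_seconds:
            failures.popleft()
        return failures

    def is_locked(self, username: str) -> bool:
        return len(self._prune(username, self.clock())) >= self.max_attempts

    def record_failure(self, username: str) -> None:
        now = self.clock()
        self._prune(username, now).append(now)

    def record_success(self, username: str) -> None:
        # A successful login clears the user's failure history.
        self._failures.pop(username, None)
```

In a login flow you would check `is_locked()` before verifying the password, call `record_failure()` when the password is wrong, and call `record_success()` when it is right. Keying only by username lets an attacker deliberately lock out a legitimate user, so some systems key by IP address as well, or instead.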

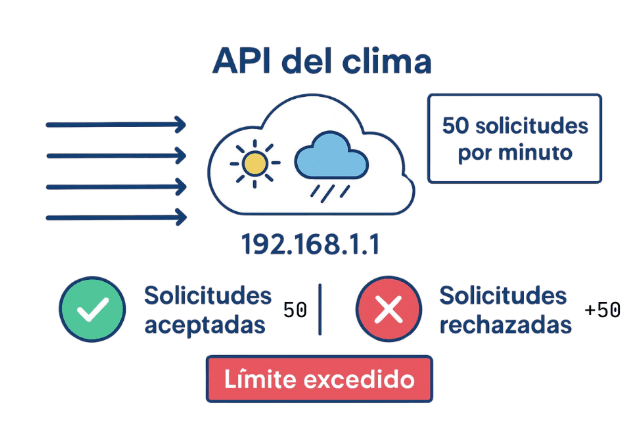

2. Managing requests in an API

Suppose you have a public API that provides weather data. To prevent a single user from monopolizing resources, you can implement a limit like "50 requests per minute per IP address." If someone tries to make more than 50 requests, the system will reject the additional ones until the allowed time interval passes.

This approach not only protects your infrastructure but also ensures a fair experience for all users.
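For the weather API example, the Python sketch below uses a token bucket per IP address. Note that this is a different algorithm from the one described above: instead of rejecting everything until the interval resets, a token bucket refills gradually, so a client that has used up its 50 requests regains roughly one request every 1.2 seconds. `TokenBucketLimiter` is a hypothetical name, and the state is kept in memory.

```python
import time
from typing import Callable, Dict, Tuple


class TokenBucketLimiter:
    """Per-key token bucket: up to `capacity` tokens, refilled at `refill_per_second`."""

    def __init__(self, capacity: int, refill_per_second: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.clock = clock
        # ip -> (tokens currently available, time of the last update)
        self._buckets: Dict[str, Tuple[float, float]] = {}

    def allow(self, ip: str) -> bool:
        now = self.clock()
        tokens, last = self._buckets.get(ip, (float(self.capacity), now))
        # Add the tokens earned since the last request, capped at capacity.
        tokens = min(self.capacity, tokens + (now - last) * self.refill_per_second)
        if tokens < 1:
            self._buckets[ip] = (tokens, now)
            return False  # bucket empty: reject this request
        self._buckets[ip] = (tokens - 1, now)  # spend one token
        return True


# "50 requests per minute per IP address": a full bucket of 50,
# refilled at 50/60 tokens per second.
weather_api_limiter = TokenBucketLimiter(capacity=50, refill_per_second=50 / 60)
```

The design choice here is burst tolerance: a fresh client may send all 50 requests at once, after which it is held to a steady rate instead of waiting for a full minute to pass.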

3. Controlling web traffic on a site

On websites with high traffic volumes, rate limiting can be an essential tool to prevent overloads. You can limit the number of simultaneous requests a single user can make, preventing bots or automated scripts from affecting the site's performance.
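Limiting simultaneous requests is a concurrency limit rather than a limit over time: what matters is how many requests from the same client are in progress at once. A minimal thread-safe sketch, assuming a hypothetical `ConcurrencyLimiter` that identifies clients by a string ID:

```python
import threading
from collections import defaultdict
from contextlib import contextmanager
from typing import Dict, Iterator


class ConcurrencyLimiter:
    """Caps how many requests from the same client may be in flight at once."""

    def __init__(self, max_in_flight: int) -> None:
        self.max_in_flight = max_in_flight
        self._in_flight: Dict[str, int] = defaultdict(int)
        self._lock = threading.Lock()  # protects _in_flight across threads

    def try_acquire(self, client_id: str) -> bool:
        with self._lock:
            if self._in_flight[client_id] >= self.max_in_flight:
                return False
            self._in_flight[client_id] += 1
            return True

    def release(self, client_id: str) -> None:
        with self._lock:
            self._in_flight[client_id] -= 1
            if self._in_flight[client_id] == 0:
                del self._in_flight[client_id]  # forget idle clients

    @contextmanager
    def slot(self, client_id: str) -> Iterator[bool]:
        # Yields True if a slot was obtained; releases it when the block exits.
        acquired = self.try_acquire(client_id)
        try:
            yield acquired
        finally:
            if acquired:
                self.release(client_id)
```

A request handler would wrap its work in `with limiter.slot(client_id) as ok:` and respond with HTTP 429 when `ok` is false; the slot is released automatically when the block exits, even if the handler raises an exception.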

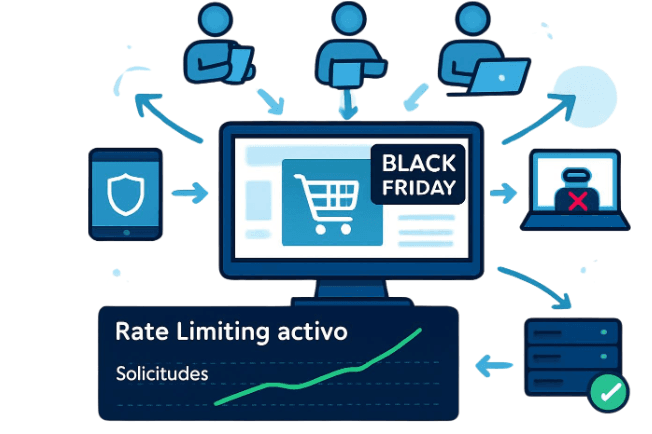

For example, some applications receive a surge of requests on special days such as Black Friday, and controlling that traffic helps keep them from crashing.

Why is rate limiting important?

Implementing rate limiting is crucial for several key reasons:

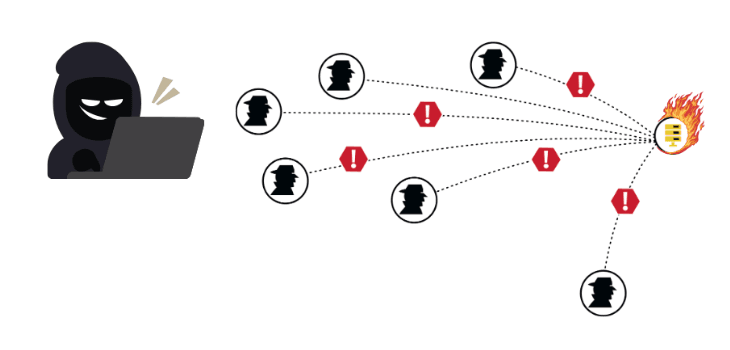

1. Security

Rate limiting helps protect systems against malicious attacks, such as denial-of-service (DoS) attacks and brute-force attempts. By limiting the number of allowed requests, you significantly reduce an attacker's ability to exploit your resources.

2. System stability

An overloaded server can lead to downtime or application failures. With rate limiting, you can prevent excessive traffic from negatively affecting the stability of your system.

3. Better user experience

By ensuring that resources are distributed fairly and in an orderly way, rate limiting contributes to a smoother experience for legitimate users. This is especially important in critical applications, such as financial services or e-commerce platforms.

4. Cost optimization

In cloud environments, where resources are billed based on usage, rate limiting can help you control costs by avoiding excessive resource consumption due to unexpected traffic spikes.

Conclusion

Rate limiting is an essential concept for any developer or system administrator looking to improve the security, stability, and performance of their applications and services. By implementing this technique, you not only protect your resources against abuse and attacks but also ensure a more efficient and satisfying experience for your users.

With the right tools and techniques, systems can be made more resilient and prepared to face the challenges of the modern digital world.
