Augmented Coding vs. Vibe Coding

There is a question I ask myself more and more often. Not when I am writing code, but when I am reviewing it: which part of this was generated by a machine, and which part was decided by a person?

The question is not trivial. I lead development teams at Kranio, where we build integrations for a wide variety of sectors: financial, logistics, retail, manufacturing, and more. These are contexts where a security error is not just a bug; it is an incident with regulatory, financial, or even legal consequences. In contexts like these, the distinction between code that works and code that is secure is not an academic nuance. It is the line between a successful deploy and a breach.

I use AI tools daily. They are very useful, and it would be dishonest to deny it. But there is a tension that is rarely addressed with the depth it deserves: the relationship between how experienced the developer using these tools is and the security of what developer and tool produce together. This article explores that tension, neither to demonize AI nor to celebrate it uncritically, but to invite a conversation that I believe we need to have as an industry.

Code that works and code that is secure are not always the same

A few months ago I did a deliberate exercise. I took an integration scenario I know well: connecting a payment service with an enterprise messaging system, processing the message, and returning the confirmation. I asked three different assistants to generate the solution. All three delivered functional code, with reasonable structure.

And all three made the same mistakes: hardcoded connection parameters that should come from environment variables, naming disconnected from the business domain, and a superficial understanding of the hexagonal architecture we use in production.

No test failed. Everything was green. And someone without context of the real architecture would have approved it without hesitation.

That is the underlying problem: the assistants do not generate bad code. They generate code that works, and that is enough to pass filters that should be more rigorous.
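Of the mistakes from that exercise, the hardcoded connection parameters are the easiest to illustrate. What follows is a minimal sketch, not the code from the exercise: the `BrokerConfig` class, the `load_broker_config` function, and the `BROKER_*` variable names are all hypothetical. It shows settings being read from the environment and the process failing fast when one is missing, instead of falling back to a value baked into the source.

```python
import os
from dataclasses import dataclass, field


@dataclass(frozen=True)
class BrokerConfig:
    """Connection settings for a messaging broker (hypothetical names)."""
    host: str
    port: int
    username: str
    # repr=False keeps the password out of logs and tracebacks that print the object.
    password: str = field(repr=False)


def load_broker_config(env=None):
    """Build the config from environment variables, failing fast if any is missing."""
    env = os.environ if env is None else env
    required = ("BROKER_HOST", "BROKER_PORT", "BROKER_USER", "BROKER_PASSWORD")
    missing = [name for name in required if not env.get(name)]
    if missing:
        raise RuntimeError("Missing required settings: " + ", ".join(missing))
    return BrokerConfig(
        host=env["BROKER_HOST"],
        port=int(env["BROKER_PORT"]),
        username=env["BROKER_USER"],
        password=env["BROKER_PASSWORD"],
    )
```

Accepting an optional `env` mapping is a design choice made for this sketch: it lets a test pass a plain dictionary without touching the real environment.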

45% of the code generated by more than 100 language models contained OWASP Top 10 vulnerabilities. In Java, the failure rate reached 72%. Defenses against XSS failed in 86% of cases. (Veracode GenAI Code Security Report)

LLMs for development are a rapidly evolving species, so these figures could drop drastically in a few weeks. But that is also part of what challenges us: to deeply understand what is being implemented, to be able to direct it correctly, and to critique it sharply.

The difference between an experienced developer and one who is just starting, using the same tool, is not in how quickly they accept suggestions. It is in the questions they ask themselves before accepting them.

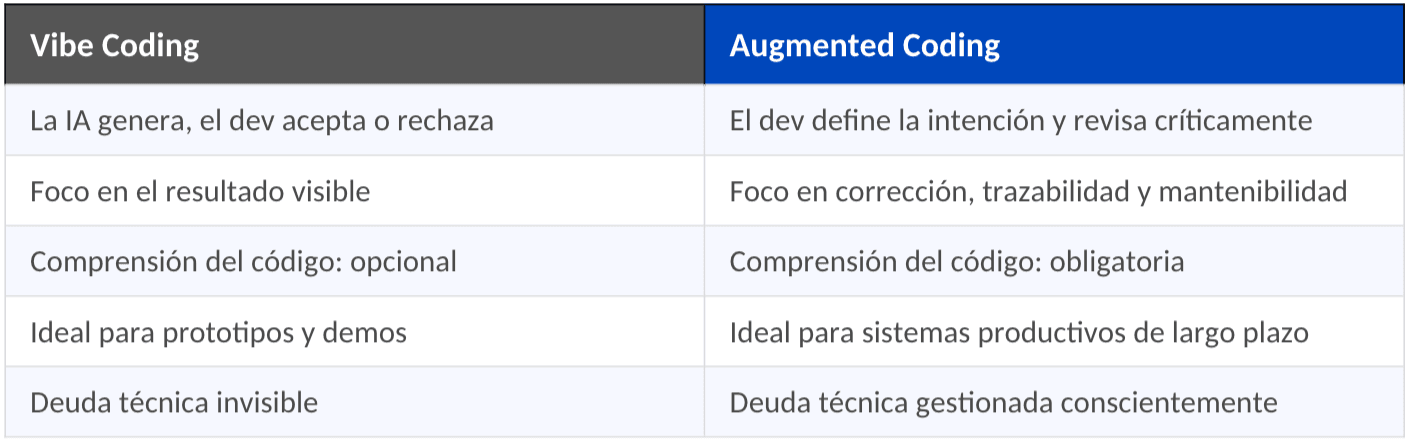

Vibe Coding vs. Augmented Coding: more than a matter of semantics

There are two ways to work with these tools. Kent Beck names them well: vibe coding and augmented coding. The distinction is not philosophical; it has direct consequences on the quality and security of what is built.

Vibe coding describes a way of programming where the developer almost completely delegates code generation to a language model. The intention is expressed in natural language, the suggestion is accepted, it is executed, the error is observed, and the process repeats. The focus is on the visible result. Does it work? Done.

Augmented coding starts from a different premise: AI amplifies the engineer’s capability, but does not replace their judgment. The developer remains responsible for every architectural decision, every tradeoff, every line that reaches production.

Faster does not always mean better: the 70% problem

Here the conversation gets uncomfortable, especially for those of us who have enthusiastically adopted these tools.

Addy Osmani, from the Chrome team at Google, has a name for this phenomenon: the 70% problem. Assistants quickly deliver the scaffolding, the obvious patterns, the boilerplate. The initial 70%. But the remaining 30% still requires the same effort as always. That’s where security, architectural decisions, and production edge cases live. And now you also have to verify what the machine generated.

For a developer with little experience, that 70% feels magical. For one with a long career, the remaining 30% is often slower than having written it from scratch.

And it is in that 30% that the code turns out to be either merely functional or truly secure.

Beck goes further: his central argument is that TDD is the essential safety net when working with AI agents, because they introduce regressions that only rigorous tests detect in time. If a team generates code with AI but does not write tests first, it is building on sand.
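As a rough illustration of what "tests first" can mean in a payment scenario, the sketch below pins down one security-relevant rule before any generated implementation is accepted. The `mask_card_number` function and its rules are hypothetical, invented for this example; the implementation is included only so the block runs on its own.

```python
import unittest


def mask_card_number(card_number: str) -> str:
    """Return the card number with every digit except the last four masked."""
    digits = "".join(ch for ch in card_number if ch.isdigit())
    if len(digits) < 12:
        raise ValueError("card number is too short")
    return "*" * (len(digits) - 4) + digits[-4:]


class MaskCardNumberTest(unittest.TestCase):
    """In a test-first workflow these cases exist before the function body does."""

    def test_only_last_four_digits_are_visible(self):
        masked = mask_card_number("4111 1111 1111 1111")
        self.assertEqual(masked, "*" * 12 + "1111")

    def test_rejects_input_that_is_too_short(self):
        with self.assertRaises(ValueError):
            mask_card_number("1234")
```

Saved as a module, the tests can be run with `python -m unittest`. If an assistant later rewrites the function in a way that leaks more digits, the first test fails, which is the kind of regression Beck's argument is about.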

The silent risk: what tools can erode

There is an aspect of this conversation that worries me more than immediate vulnerabilities, and it has to do with something we value highly as developers: our ability to understand what we build.

In January 2026, Anthropic published a controlled study with 52 engineers learning a new Python library. Those who used AI assistance scored 17% lower on comprehension tests, with debugging skills being the most affected. But the most revealing finding was not the number. It was the pattern they found:

17% lower comprehension among developers who used AI passively. However, those who generated code and studied it thoroughly, alternated between code and conceptual explanations, or asked design questions scored over 65% in comprehension. The how matters more than the if. (Anthropic, January 2026)

They identified six ways of interacting with AI. Three lead to scores below 40%: full delegation, progressive dependency, and using AI as a crutch for debugging. The other three exceed 65%: generating and studying thoroughly, alternating code with explanations, and asking conceptual questions.

This is not an argument against AI. It is an argument in favor of using it intentionally.

And there is a broader dimension that deserves attention. Entry-level development job postings dropped 60% between 2022 and 2024. If we stop training juniors because AI does their work, who will have the necessary experience to supervise these tools in five or ten years? This is not a rhetorical question. It is a real challenge for the sustainability of our teams.

What we are learning to do: principles from practice

I do not intend to give recipes. Each context has its particularities. But there are principles that in our experience work and that evidence supports.

Prevent before generating

Tools like Claude Code support instruction files where you can write down rules such as: do not hardcode secrets, do not implement retries if the infrastructure already handles them, URLs come from external configuration. That is the first line of defense. The second is a set of automated checks: pre-commit hooks that scan for secrets before they reach the repository, and static analysis in the CI pipeline that detects vulnerabilities before the merge. In teams that use AI intensively, this stops being good practice and becomes a necessity.
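To make the pre-commit idea concrete, here is a deliberately small sketch of a secret scan. The three rules and the `find_secrets` and `scan_files` names are illustrative assumptions, not the rule set of any real tool; in practice a team would more likely adopt a dedicated scanner with a maintained rule set.

```python
import re

# Illustrative rules only; real scanners ship far larger, tuned rule sets.
SECRET_PATTERNS = {
    "aws-access-key-id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private-key-header": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    # A credential-like name assigned a quoted literal of four or more characters.
    "hardcoded-credential": re.compile(
        r"(?i)(password|passwd|secret|api_key|token)\s*[:=]\s*['\"][^'\"]{4,}['\"]"
    ),
}


def find_secrets(text):
    """Return (line_number, rule_name) pairs for every line that matches a rule."""
    findings = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for rule_name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((line_number, rule_name))
    return findings


def scan_files(paths):
    """Scan the given files; return 1 if anything suspicious is found, else 0."""
    exit_code = 0
    for path in paths:
        with open(path, encoding="utf-8", errors="ignore") as handle:
            for line_number, rule_name in find_secrets(handle.read()):
                print(f"{path}:{line_number}: possible secret ({rule_name})")
                exit_code = 1
    return exit_code
```

A hook script would pass the staged file names to `scan_files` and exit with its return value, so that a non-zero result blocks the commit. Note that a line such as `password = os.environ["BROKER_PASSWORD"]` is not flagged, because the value is not a quoted literal.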

Review with more rigor, not less

ThoughtWorks placed complacency with AI-generated code in its Hold category, the strongest warning of the Tech Radar, for three consecutive editions. The reason is intuitive: when we know the code was generated by a machine, we give it less scrutiny without realizing it. The opposite habit requires deliberate effort.

Invest in understanding, not just production

This is, for me, the most important principle. The successful patterns from the Anthropic study have something in common: the developer does not delegate their understanding. They generate, study, ask, and only then integrate.

I have started applying this in my own workflow: when an AI tool generates an integration solution for me, before accepting it I force myself to explain why it works. If I cannot, I discard it. That attitude of active curiosity, of not accepting what is not understood, is what separates productive use of AI from use that erodes skills.

Experience as a pillar of security

Martin Fowler compared the impact of LLMs to the transition from assembler to high-level languages. The analogy is useful, though incomplete. When we moved from assembler to C, we knew what we were abstracting. With generative AI, many teams are abstracting things they never understood in the first place.

The next time you see AI-generated code that passes all the tests and looks clean, it's worth asking the questions the machine cannot ask itself:

- Where do those credentials come from?

- Who handles the retries?

- Does this logic belong here or in the infrastructure?

- Do we understand what we are approving?

Those questions are not generated by any model. They are generated by experience. And that experience is what makes a team secure, with or without artificial intelligence.

Conclusion

AI writes code.

We decide if it is secure.

That responsibility is not delegated.

Further reading

- Veracode GenAI Code Security Report — Security assessment of 100+ models generating code

- OpenSSF: Security-Focused Guide for AI Code Assistants — Ready-to-use instruction templates

- Anthropic: How AI Assistance Impacts Coding Skills — The study on the 6 interaction patterns

- ThoughtWorks Tech Radar: Complacency with AI-Generated Code — Why it is on 'Hold' since 2024

- OWASP Top 10 for LLM Applications 2025 — The top 10 risks when using LLMs

- Kent Beck: Augmented Coding — Augmented Coding: Beyond the Vibes
